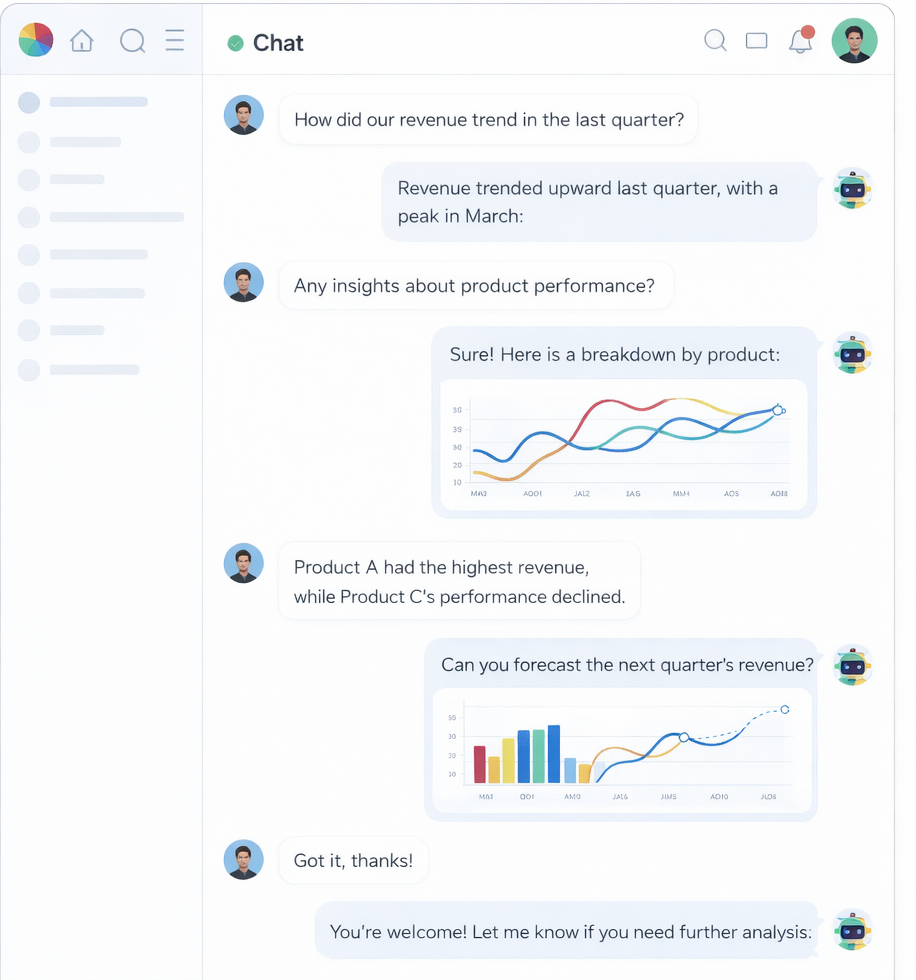

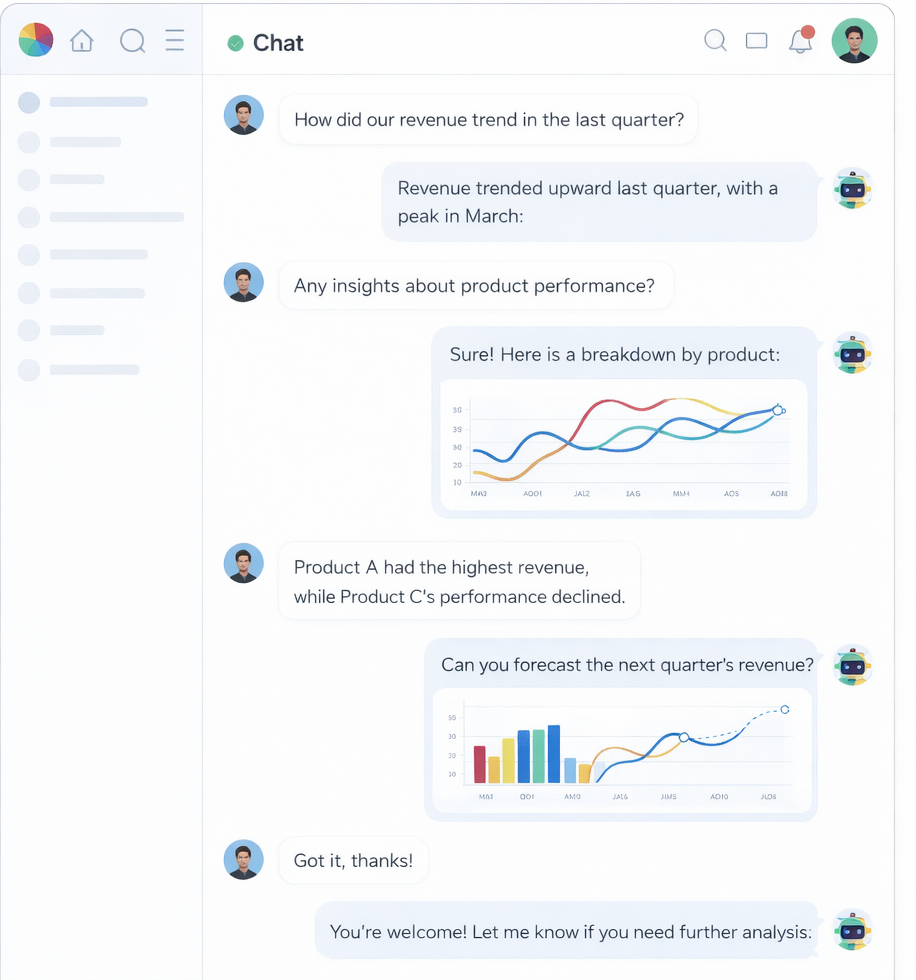

AI data analyst platform

Glaukes helps teams query operational data in plain English, understand what changed, and turn answers into action without living in SQL or rigid BI dashboards.

Platform

01 Conversations

A conversation surface that works like an analyst, not a chatbot.

Atlas handles multi-turn questions, selects the right tool for the job, and returns answers as text, tables, charts, dashboards, or reports from one thread.

02 Data Sources

Glaukes connects directly to the systems where the business already runs, introspects schema automatically, and keeps query execution server-side for tighter control.

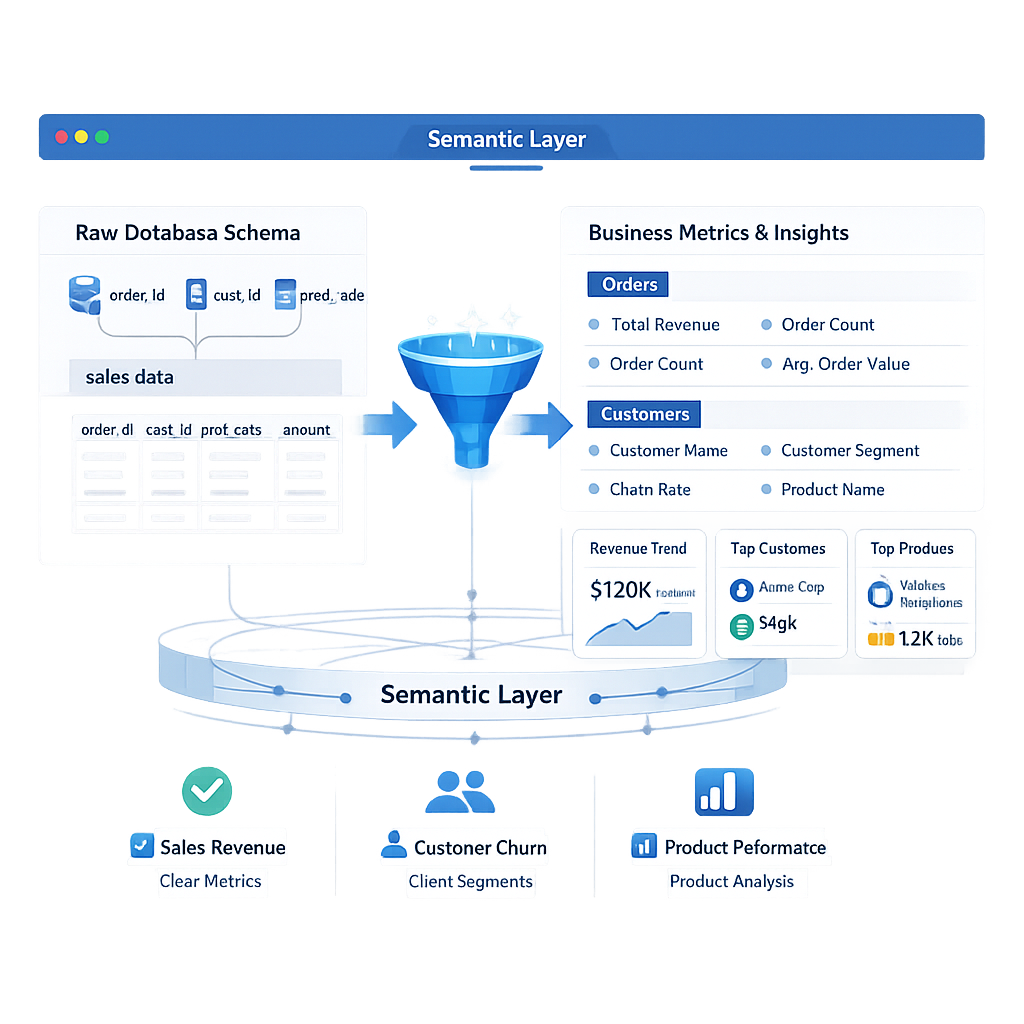

03 Data Map

The Data Map translates raw schema into business entities, metrics, and relationships so Atlas stops guessing and starts answering with consistency.

04 Automations

Save prompts as scheduled automations, deliver outputs on a cadence, and pin results into dashboards so insights become part of daily operations.

Capabilities

From approvals to merchant performance, teams can investigate live data without waiting on SQL support.

Bring together data sources like Postgres, Snowflake, BigQuery, and internal event streams in one workspace.

Shared definitions for KPIs and entities make answers consistent across finance, risk, and operations.

Save investigations, recurring reports, and team views once the right question has been answered.

Build live views from conversation output — charts, tables, and summaries that update as the data does.

Approvals, visibility rules, and auditability keep the experience usable in real financial operations.

Solutions

Reconcile settlements, monitor chargebacks, and track transaction volumes in natural language — no SQL backlog required.

Query patient intake timelines, billing cycles, and operational throughput with governed, role-aware access.

Track GMV, conversion funnels, and inventory health across channels without building a new dashboard for every request.

Investigate delivery performance, carrier cost variance, and route efficiency directly from the data in your warehouse.

Understand audience behavior, content performance, and subscriber churn through recurring automations that keep the whole team current.

FAQ

No. Glaukes never stores the data held in your databases. It connects live, executes read-only queries server-side, and returns results to your session. Only schema metadata (table names, column names, and types) is cached to speed up query generation; row-level data is never cached.
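As a rough illustration of the read-only, metadata-only pattern described above, the sketch below uses Python's built-in `sqlite3` module. It is not Glaukes's actual implementation; the function names `open_read_only` and `read_schema_metadata` are hypothetical, and SQLite is used only because it needs no server.

```python
import sqlite3


def open_read_only(path: str) -> sqlite3.Connection:
    """Open a SQLite file in read-only mode using a URI filename."""
    return sqlite3.connect(f"file:{path}?mode=ro", uri=True)


def read_schema_metadata(conn: sqlite3.Connection) -> dict:
    """Return {table: [(column, type), ...]} without reading any row data."""
    tables = [
        row[0]
        for row in conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        )
    ]
    metadata = {}
    for table in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        metadata[table] = [(col[1], col[2]) for col in columns]
    return metadata
```

With a connection opened this way, any write attempt raises `sqlite3.OperationalError`, so only `SELECT`-style statements can succeed, and the cached structure holds names and types only.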

Atlas uses your schema metadata and, optionally, your Data Map (a semantic model you define) to construct accurate SQL. Credentials are encrypted and never sent to the LLM — only the structural context needed to produce the query is passed.
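One way to picture the separation between credentials and structural context is the minimal sketch below. The `DataSource` class, its field names, and the prompt layout are all assumptions made for illustration; the source does not describe how Atlas formats its context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataSource:
    name: str
    dsn: str  # holds credentials; deliberately never read when building context
    schema: dict  # {table: [(column, type), ...]}


def build_prompt_context(source: DataSource, question: str) -> str:
    """Assemble LLM context from table and column metadata only."""
    lines = [f"Data source: {source.name}", "Tables:"]
    for table, columns in sorted(source.schema.items()):
        cols = ", ".join(f"{name} {col_type}" for name, col_type in columns)
        lines.append(f"- {table}({cols})")
    lines.append(f"Question: {question}")
    return "\n".join(lines)
```

Because `build_prompt_context` never touches `dsn`, the connection string cannot leak into the text sent to the model.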

Glaukes supports PostgreSQL, MySQL, BigQuery, Snowflake, Redshift, SQL Server, SQLite, Supabase, and ClickHouse out of the box. CSV and Excel file uploads are also supported as temporary in-session data sources.

The Data Map is an optional semantic layer that maps raw table and column names to business-friendly concepts — entities, metrics, and relationships. Without it, Atlas still works well. With it, answers become more consistent and reliable because Atlas stops guessing terminology.
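To make the idea of a semantic layer concrete, here is a small sketch of what such a mapping might look like as plain Python data. The structure, the keys, and the `matched_definitions` helper are hypothetical; the real Data Map format is not described in the source.

```python
# Hypothetical Data Map: business terms mapped to tables or SQL expressions.
DATA_MAP = {
    "entities": {
        "merchant": "merchants",
    },
    "metrics": {
        "approval rate": "AVG(CASE WHEN status = 'approved' THEN 1.0 ELSE 0.0 END)",
    },
}


def matched_definitions(question: str, data_map: dict) -> dict:
    """Return the Data Map entries whose business term appears in the question."""
    text = question.lower()
    found = {}
    for kind, terms in data_map.items():
        for term, definition in terms.items():
            if term in text:
                found[f"{kind}:{term}"] = definition
    return found
```

A question such as "What was the approval rate by merchant last week?" would match both entries, so the query generator receives one agreed definition of each term instead of inferring it from column names.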

Yes. Automations let you save any conversation prompt as a scheduled analysis — daily, weekly, or on a custom cadence — and deliver the output to email, Slack, or a dashboard. No engineering work required.

Each workspace has role-based knowledge and data source scoping. You control which team members can see which data sources and which background context Atlas uses when answering. All queries are logged for audit review.
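The scoping and audit behavior above could be modeled roughly as follows. This is a sketch under stated assumptions: the `Workspace` class, its fields, and the choice to log denied attempts as well as allowed ones are illustrative, not a description of Glaukes internals.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Workspace:
    # role -> names of the data sources that role may query
    source_scopes: dict
    audit_log: list = field(default_factory=list)

    def authorize(self, user: str, role: str, source: str, sql: str) -> bool:
        """Check the role's scope and record the attempt for audit review."""
        allowed = source in self.source_scopes.get(role, set())
        # Assumption: every attempt is logged, whether or not it was allowed.
        self.audit_log.append(
            {
                "at": datetime.now(timezone.utc).isoformat(),
                "user": user,
                "role": role,
                "source": source,
                "sql": sql,
                "allowed": allowed,
            }
        )
        return allowed
```

A caller would run the query only when `authorize` returns `True`; the log entry exists either way.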

Glaukes routes through LiteLLM, giving you access to Groq, Google Gemini, DeepSeek, and local Ollama models. You can set a default model per workspace or switch models at the conversation level.
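The default-plus-override behavior can be sketched as a small resolver. LiteLLM identifies models with `provider/model` strings; the specific model names below and the `resolve_model` helper are illustrative assumptions, not Glaukes configuration.

```python
from typing import Optional

# Provider prefixes for the backends named above, in LiteLLM's "provider/model" form.
SUPPORTED_PROVIDERS = {"groq", "gemini", "deepseek", "ollama"}


def resolve_model(workspace_default: str, conversation_override: Optional[str] = None) -> str:
    """Pick the conversation-level model if set, else the workspace default."""
    model = conversation_override or workspace_default
    provider = model.split("/", 1)[0]
    if provider not in SUPPORTED_PROVIDERS:
        raise ValueError(f"Unsupported provider: {provider}")
    return model


# The resolved string would then be passed to LiteLLM, for example:
#   litellm.completion(model=resolve_model(default, override), messages=[...])
```

Keeping the choice in one function means a conversation can switch to a local Ollama model without changing the workspace default.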

The platform is designed to run on your own infrastructure. The backend is a FastAPI service and the frontend is a Next.js application — both can be deployed to your own cloud environment with your own database credentials and LLM API keys.

Connect your warehouse, ask your first question, and get a real answer — without writing a single line of SQL.